What Studies Actually Say About AI Clinical Decision Support Accuracy

AI clinical decision support tools have demonstrated real capability in controlled research settings. Whether that capability translates into clinical benefit depends almost entirely on how the tool was designed — and the research is now specific enough to tell you exactly what to look for.

When an emergency physician orders a test, prescribes a medication, or rules out a diagnosis, that decision draws on years of training, accumulated clinical experience, and whatever information happens to be immediately available at the bedside. In a high-volume emergency department, that available information is often incomplete, retrieved under time pressure, and filtered through a cognitive state shaped by everything that has already happened that shift.

Clinical decision support tools exist to close that gap - to put the right evidence in front of the right clinician at the right moment. The question the research is now beginning to answer with real specificity is: how well do AI-powered CDS tools actually do that, and what separates the ones that work from the ones that don't?

This article summarizes what the current evidence says, addresses the limitations the research has honestly identified, and explains why the design architecture of a CDS tool matters as much as the underlying AI.

What Clinical Decision Support Is and Isn't

Before getting into accuracy data, it helps to be precise about what the research is actually measuring. Clinical decision support is not a single technology - it's a category that spans everything from basic drug interaction alerts built into an EHR to sophisticated AI systems that analyze patient data in real time and generate differential diagnoses, treatment recommendations, and evidence-based clinical guidance.

The distinction matters because the research on CDS accuracy is not uniform across the category. A study measuring the performance of a rule-based alert system is measuring something fundamentally different from a study evaluating an LLM-powered diagnostic assistant. Both carry the "CDS" label, but their design, their failure modes, and their clinical utility are entirely different.

In emergency medicine specifically, the most relevant CDS tools are those that function at the point of care - delivering real-time guidance during or immediately after a patient encounter, based on the specific clinical context of that encounter. Not generic knowledge retrieval. Not a search engine pointed at medical literature. A tool that takes what the physician knows about the patient in front of them and returns evidence-based recommendations calibrated to that specific situation.

That's a harder problem than it sounds, and the research reflects that difficulty with appropriate nuance.

The Promising Research Findings

The evidence base for AI-assisted clinical decision support has grown substantially in the past three years, and the findings are genuinely encouraging in several specific areas.

Diagnostic support in structured environments

A 2024 systematic review published in JMIR examining AI applications in emergency department decision-making found that AI techniques have demonstrated meaningful promise in improving diagnosis, imaging interpretation, triage, and medical decision-making within ED settings. The review identified particular strength in high-volume, pattern-recognition tasks - conditions where the AI's ability to process and cross-reference large datasets gives it a measurable advantage over unaided clinical recall.

AI performance on clinical reasoning benchmarks

A landmark randomized clinical trial published in JAMA Network Open in 2024 from Stanford produced a finding that has reframed how the field thinks about AI CDS potential. The study found that the LLM alone - without physician involvement - scored 16 percentage points higher on diagnostic reasoning cases than the physician-only control group. That result was statistically significant and suggests that the raw clinical reasoning capability of well-trained AI systems is real and meaningful, not theoretical.

Evidence synthesis at scale

One of the most consistently validated CDS applications is the ability to synthesize large bodies of peer-reviewed evidence and return relevant, sourced recommendations in real time. Where a physician consulting literature during a clinical encounter faces time constraints and cognitive load that limit the depth of that retrieval, an AI system trained on comprehensive medical literature can deliver breadth and specificity simultaneously. This is particularly relevant in emergency medicine, where encounter variety is high and the cognitive cost of staying current across all relevant clinical domains is substantial.

Where the Research Identifies Limitations, and Why They Matter

A credible summary of AI CDS accuracy has to include what the research has identified as genuine limitations - and the 2024 JAMA Network Open trial is the most important source on this point.

While the LLM alone outperformed physicians on diagnostic benchmarks, the same trial found that physicians who had access to the LLM did not perform significantly better than physicians using conventional resources without it. The difference between the LLM-assisted and control groups was two percentage points - not statistically significant. The researchers noted that this finding suggests that access alone to LLMs will not improve physician performance and that further development in human-computer interactions is needed to realize the potential of AI in clinical decision support systems.

This finding is important not because it undermines AI CDS, but because it precisely identifies where the gap lies. The issue isn't the AI's capability in isolation - the 16-point gap established that clearly. The issue is the interface between the AI's capability and the physician's clinical workflow. When that interface isn't well designed, when the tool is available but not embedded, the performance uplift disappears.

A review published in the Journal of Medical Internet Research examining AI applications in emergency medicine reinforced this point, noting that most research on AI in emergency medicine has been retrospective and has not led to applications beyond proof of concept - with the gap between demonstrated capability and real-world clinical integration remaining the primary challenge. The review called for critical appraisal of whether clinical AI solutions actually impact patient outcomes, not just benchmark performance.

Three specific limitation areas the research consistently identifies:

Hallucination risk in general-purpose models

As covered in the Annals of Emergency Medicine's review of AI's future in emergency medicine, general-purpose LLMs often generate plausible but incorrect clinical information. A 2023 Stanford study found that AI responses on clinical questions were largely unharmful but correlated poorly with expert consensus - a finding that creates a specific risk in clinical environments where a confidently wrong recommendation is more dangerous than no recommendation at all.

Performance degradation in atypical presentations

AI CDS tools trained primarily on common presentations perform less reliably on rare or atypical cases - exactly the category where clinical decision support would be most valuable. This is a known limitation that specialty-trained systems address more effectively than general-purpose tools.

Workflow integration depth

As the JAMA trial found, availability without integration produces almost no clinical benefit. This is not a research finding about AI capability - it's a finding about implementation design, and it has direct implications for how CDS tools should be evaluated and deployed.

What Separates Effective Tools from Ineffective Ones

Given the research, the evaluation question for any CDS tool isn't "does it have AI?" — it's whether the design architecture addresses the specific failure modes the studies have identified. Four variables consistently separate the tools that produce clinical benefit from those that don't.

1. Specialty-specific training data.

General-purpose LLMs trained across broad domains underperform specialty-trained systems in the specific clinical contexts where they're deployed. A CDS tool for emergency medicine should be trained on emergency medicine literature, clinical guidelines, and acute care presentations - not a general medical corpus with emergency cases included. The performance difference on atypical and complex presentations is where this distinction shows up most clearly.

2. Evidence sourcing and citation transparency.

One of the most reliable markers of a well-designed CDS tool is whether its recommendations come with sourced citations that a physician can verify. A tool that delivers a differential or a treatment recommendation without linking it to the evidence it's based on is asking the physician to trust the output without the ability to audit it. In a clinical environment where hallucination risk is real, that's not a reasonable ask.

3. Workflow integration depth, not availability.

The JAMA trial's central finding - that access without integration produces no benefit — translates directly into a design requirement. CDS tools that require a physician to leave their primary workflow to consult a separate system will not be used at the moment they're most needed. The tool needs to be present at the point of clinical decision-making, not available nearby.

4. Real-time delivery during the encounter.

Retrospective recommendations - guidance delivered after the encounter is documented - have limited clinical value in emergency medicine, where decisions are made in real time with immediate consequences. The evidence supports CDS tools that deliver guidance during the encounter, not as a post-hoc chart review.

How DocAssistant AI Was Designed Around These Findings

Most of the limitations the research identifies in AI CDS tools trace back to a common design failure: tools built for general clinical environments and applied to emergency medicine without the specialty-specific architecture the evidence says matters.

DocAssistant AI was built specifically for emergency and acute care physicians, by emergency physicians, with the research-identified failure modes as explicit design constraints rather than afterthoughts.

The clinical decision support layer is trained on over 9,000 peer-reviewed medical articles through a direct partnership with StatPearls - the largest peer-reviewed point-of-care resource in medicine, covering all 172 medical specialties with continuous updates from more than 8,000 specialty-trained authors and editors. Every recommendation DocAssistant delivers includes citations linked to StatPearls source material, which means the physician receives evidence-based guidance they can verify rather than outputs they have to take on faith. That's the direct design response to hallucination risk and citation transparency - two of the most consistently identified limitations in the research.

The platform delivers CDS in real time during the patient encounter - not as a retrospective review tool, not as a separate system to consult, but integrated into the documentation workflow itself. When a physician is capturing an encounter, DocAssistant simultaneously generates differential diagnoses, testing and treatment suggestions, and evidence-backed clinical recommendations calibrated to what's being documented. The CDS and the documentation happen in the same workflow moment, which is the design architecture the JAMA trial's findings suggest is necessary to realize the clinical benefit the research has shown AI systems are capable of delivering.

This also addresses the workflow depth variable directly. DocAssistant isn't available as an adjacent tool the physician can consult if they choose to. It's embedded in the encounter workflow, which means the guidance is present at the point of decision rather than requiring a separate cognitive step to access.

The result at Elite Hospital Partners: physicians using DocAssistant reduced charting time by 85% while capturing $399,000 per provider per year in previously lost revenue - with the CDS layer contributing to both outcomes simultaneously. More complete documentation of clinical reasoning supported higher MDM billing levels. Real-time differential support reduced the cognitive load of complex decision-making under volume pressure. The documentation and the decision support weren't separate tools producing separate benefits - they were the same platform producing compounding value.

The Honest Summary of Where AI CDS Stands

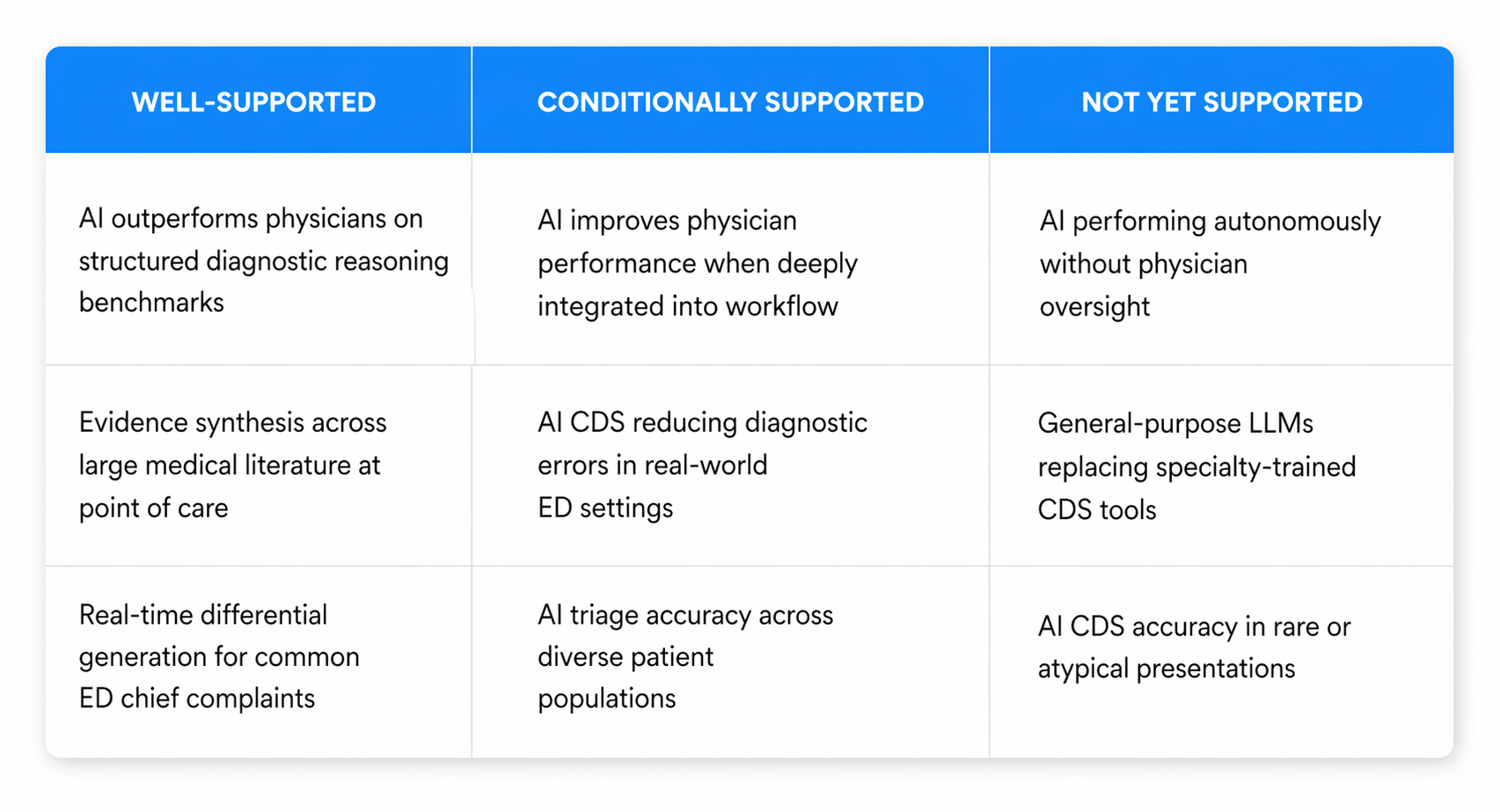

The research on AI clinical decision support accuracy tells a consistent story if you read it without confirmation bias in either direction.

AI systems, when well-designed and specialty-trained, have demonstrated genuine clinical reasoning capability that in controlled settings exceeds unaided physician performance. That's not a marketing claim - it's a finding from a randomized clinical trial published in JAMA Network Open. The capability is real.

At the same time, that capability doesn't automatically transfer into clinical benefit when a tool is simply made available. The interface between AI capability and physician workflow is the variable that determines whether the research benchmark translates into real-world outcomes. General-purpose tools applied to emergency medicine without specialty-specific training, without citation transparency, and without genuine workflow integration consistently underperform relative to their potential - and relative to the needs of the clinical environment they're supposed to serve.

The organizations and physicians that are seeing measurable benefit from AI CDS are the ones using tools designed to close both gaps simultaneously - the accuracy gap and the integration gap. That's the design standard the evidence supports, and it's the standard purpose-built emergency medicine platforms are best positioned to meet.

If you want to see how that translates in practice - including what a real-world ED implementation delivers in terms of documentation efficiency, clinical decision support quality, and revenue recovery - DocAssistant AI offers a structured pilot designed around your department's specific volume, EHR environment, and clinical workflow. No commitment required to see the data.