Auditing and Validating AI-Generated Notes in Your ED

AI documentation governance isn't about slowing down adoption. It's about making sure that when the governance question gets asked - by legal, by compliance, by a board member - your organization has a process, not just a vendor's assurance.

Emergency departments don't have the luxury of slow adoption curves. When a new tool goes live in an ED, it goes live at volume, under pressure, and immediately. That's exactly what makes AI documentation powerful in this environment - and exactly what makes the governance question feel urgent. This article is a practical framework for the clinical and compliance leaders who need to answer that question with something more than a vendor's assurance that their platform is safe.

A Question Worth Taking Seriously

Somewhere in your organization, someone has already asked it. Maybe it was legal, maybe a senior physician, maybe a board member after a meeting about technology risk. The question sounds something like this: how do we know these AI-generated notes are accurate, and what happens if one gets audited?

It's a legitimate question. It deserves a serious answer, not a reassurance.

The challenge is that most guidance on AI governance in healthcare is either too abstract to act on or written for technology teams rather than the clinical and compliance leaders who will ultimately be responsible for what ends up in the medical record. This article is written for the people who will be asked to sign off on AI documentation in an emergency department - CMOs, compliance officers, and ED medical directors - and who need a practical, defensible framework for doing so.

The goal here is not to raise alarms. The goal is to give your organization a structured way to think about AI note validation, so that the answer to the governance question is a process, not a guess.

Why AI Note Validation Is a Different Problem Than Traditional Chart Audits

Most ED compliance teams already have a chart audit process. Random sample reviews, physician-specific audits, coding accuracy checks. Those processes were built around human-generated documentation where the failure modes are well understood: a physician forgets to document a finding, a coder misinterprets a note, a procedure gets missed.

AI-generated notes introduce a different and less familiar set of failure modes. The note isn't incomplete because someone forgot. It may be confidently wrong because the AI misheard something, misinterpreted clinical context, or generated plausible-sounding language that doesn't accurately reflect what happened in the room. Research published in JAMA Network Open examining AI accuracy in clinical documentation found measurable rates of both hallucination and omission in AI-generated clinical text, with errors subtle enough to be missed by a physician scanning quickly at the end of a shift.

That's a fundamentally different audit challenge, and applying a traditional chart review lens to it misses the specific risks that AI documentation actually carries. Three failure modes in particular don't surface reliably in standard audit processes:

✦ Hallucination risk. AI models can generate plausible clinical language that was never actually said during the encounter. A traditional audit designed to catch omissions won't catch additions that shouldn't be there.

✦ Context collapse. In high-volume ED environments with back-to-back patient encounters, AI tools can occasionally blend context from one encounter into the next. This is rare, but the consequences in a medical record are serious enough to warrant a specific audit control.

✦ MDM level drift. Without a built-in billing analysis layer, AI-generated notes may systematically document at a consistent but incorrect MDM level - either overcoding or undercoding - in ways that only become visible in aggregate rather than in individual chart review. By the time a revenue cycle audit surfaces it, the pattern has been running for months.

None of these failure modes are reasons to avoid AI documentation. They are reasons to build a governance framework that accounts for the specific risk profile of AI-generated notes rather than assuming that existing audit infrastructure is sufficient.

The Regulatory Landscape

The regulatory environment around AI documentation is still evolving, and that uncertainty is part of what makes the governance question feel harder than it needs to be. Here is what the current landscape actually requires, stated plainly.

CMS and documentation responsibility

CMS does not currently have AI-specific documentation regulations. What it does have are documentation accuracy and signature standards that apply regardless of how a note was generated. According to CMS Medicare signature requirements, if you use a scribe, including artificial intelligence technology, the physician must sign the entry to authenticate the documents and the care provided. The responsibility for the accuracy of every note in the medical record sits with the physician who attests it, whether that note was written by hand, dictated, human-scribed, or AI-generated. That responsibility doesn't change with the tool. What changes is the governance burden: the physician and the organization must ensure the tool produces accurate, defensible output. Organizations that treat AI documentation as someone else's problem because it came from a vendor are misreading where the regulatory accountability actually sits.

OIG audit exposure

The OIG's active work plan reflects a healthcare oversight environment that is increasingly focused on billing accuracy, coding integrity, and the use of technology in documentation. Organizations that cannot demonstrate a validation process for AI-generated notes are in a weaker audit position - not because AI documentation is inherently suspect, but because the absence of a documented governance process signals that the organization hasn't systematically addressed a known risk. Proactive compliance, including maintaining a governance framework for AI documentation, is the posture that reduces audit exposure rather than inviting it.

HIPAA and data security

Any AI scribing tool that handles protected health information must meet HIPAA security standards. SOC 2 Type 2 certification is the clearest independent validation that a vendor's data handling architecture meets those standards. It's not a HIPAA certification in and of itself, but it is the most defensible answer to a compliance team's data security questions about any AI tool that touches patient data.

The honest summary of where things stand: the organization is responsible for the accuracy of every note regardless of how it was generated, and the absence of a documented validation process is a liability that a well-designed governance framework directly addresses.

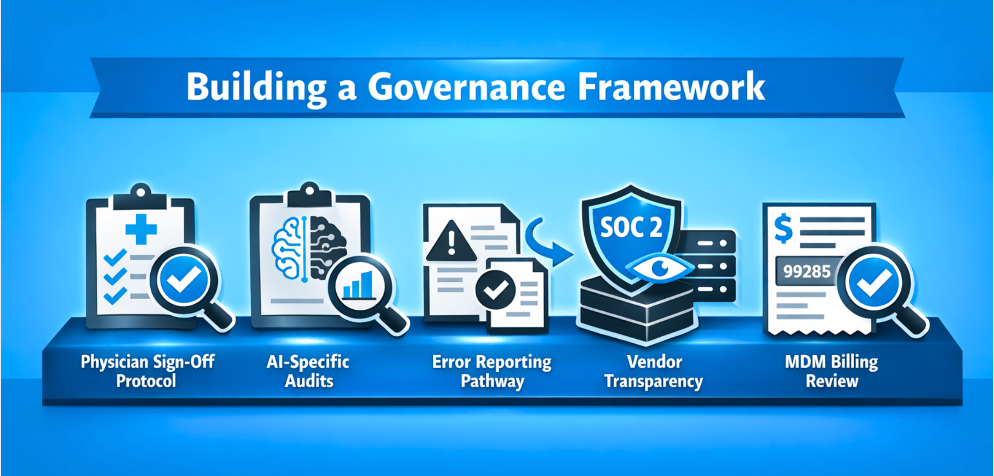

Building a Governance Framework

This is the core practical section. Five components that any emergency department deploying AI documentation should have in place - not as bureaucratic compliance overhead, but as operational infrastructure that catches problems before they become liabilities.

1. A Physician Sign-Off Protocol That Means Something

The most important governance control in any AI documentation system is physician review before attestation. But review needs to mean more than scrolling past a note and clicking sign. A meaningful protocol specifies what physicians are actively reviewing for, what the expectation is around review time, and what the process is when they identify an error. Organizations that treat sign-off as a formality are carrying governance risk they may not fully recognize until an audit surfaces it. The protocol doesn't need to be lengthy - it needs to be explicit and consistently followed.

2. A Regular AI-Specific Audit Process

Separate from standard chart audits, AI-generated notes benefit from a periodic accuracy review designed specifically for the failure modes described above. A sample of ten to fifteen notes per provider per month, reviewed against the actual encounter where possible, can surface systematic problems before they become patterns. The important distinction here is that someone in the organization owns this process, it runs on a defined schedule, and the findings feed back into both physician education and vendor communication. An audit that produces findings no one acts on is not a governance control - it's documentation theater.
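As one way to make the monthly sample concrete, the sketch below draws a random sample of notes per provider. It is a minimal illustration, not a prescribed method: the function name, the (provider_id, note_id) input shape, and the example data are all assumptions made for this example, and a real department would pull note lists from its own EHR or scribe platform export.

```python
import random
from collections import defaultdict


def sample_notes_for_audit(notes, per_provider=10, seed=None):
    """Pick up to `per_provider` notes at random for each provider.

    `notes` is a list of (provider_id, note_id) pairs for one audit period.
    Both the input shape and the default sample size are assumptions.
    """
    rng = random.Random(seed)  # a fixed seed makes the draw reproducible
    by_provider = defaultdict(list)
    for provider_id, note_id in notes:
        by_provider[provider_id].append(note_id)

    sample = {}
    for provider_id, note_ids in by_provider.items():
        # Low-volume providers may have fewer notes than the target size.
        k = min(per_provider, len(note_ids))
        sample[provider_id] = sorted(rng.sample(note_ids, k))
    return sample


# Hypothetical month: one high-volume and one low-volume provider.
notes = [("dr_a", f"A-{i:03d}") for i in range(40)]
notes += [("dr_b", f"B-{i:03d}") for i in range(6)]
audit_sample = sample_notes_for_audit(notes, per_provider=10, seed=2024)
```

Recording the seed alongside the audit findings lets a reviewer show later that the sample was drawn at random rather than hand-picked.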

3. An Error Reporting and Correction Pathway

When a physician finds an error in an AI-generated note, there needs to be a frictionless pathway to correct it and flag it for review. If correction requires navigating multiple systems or submitting a help desk ticket, physicians will stop reporting errors and start quietly working around them - which is a governance failure that looks, on the surface, like an adoption problem. The correction pathway should be built directly into the clinical workflow so that reporting an error takes less effort than ignoring one.

4. Vendor Transparency and Auditability

The AI scribe platform your organization uses should be able to answer clearly and specifically: how does the model generate notes, what training data was it built on, how are errors handled at the platform level, and what audit logging exists within the system. If a vendor cannot answer those questions with specifics, that gap becomes your organization's governance gap. SOC 2 Type 2 certification is the baseline for vendor accountability - it means an independent auditor has verified the vendor's data handling and security practices against established standards, not just reviewed their internal documentation.

5. An MDM Billing Accuracy Review Layer

Since 2023, every ED note has carried a direct billing consequence tied to its MDM documentation level. A governance framework that audits clinical accuracy without also auditing billing accuracy is incomplete. Whether that review layer lives inside the AI platform or sits alongside it as a separate process, organizations need a mechanism that validates whether documented MDM complexity aligns with the care delivered and the code submitted. This is both a compliance issue and a revenue issue, and the two are inseparable in an emergency medicine documentation context.
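Because MDM drift tends to show up in aggregate rather than in any single chart, a review layer can compare the documented level against a reviewer's independent determination across a sample. The sketch below is a rough illustration under stated assumptions: the four-level ranking, the function name, the minimum sample size, and the 30% threshold are all hypothetical choices for this example, not regulatory values or any platform's actual logic.

```python
from collections import defaultdict

# Assumed ordering of MDM levels, lowest to highest complexity.
MDM_RANK = {"straightforward": 1, "low": 2, "moderate": 3, "high": 4}


def mdm_drift_by_provider(reviews, min_notes=5, threshold=0.3):
    """Summarize documented-vs-reviewed MDM differences per provider.

    `reviews` is a list of (provider_id, documented_level, reviewed_level).
    `min_notes` and `threshold` are illustrative values only.
    """
    counts = defaultdict(lambda: {"notes": 0, "over": 0, "under": 0})
    for provider_id, documented, reviewed in reviews:
        diff = MDM_RANK[documented] - MDM_RANK[reviewed]
        entry = counts[provider_id]
        entry["notes"] += 1
        if diff > 0:
            entry["over"] += 1    # documented higher than the reviewer found
        elif diff < 0:
            entry["under"] += 1   # documented lower than the reviewer found

    summary = {}
    for provider_id, entry in counts.items():
        flag = None
        if entry["notes"] >= min_notes:  # skip samples too small to judge
            if entry["over"] / entry["notes"] >= threshold:
                flag = "possible overcoding"
            elif entry["under"] / entry["notes"] >= threshold:
                flag = "possible undercoding"
        summary[provider_id] = {**entry, "flag": flag}
    return summary


# Hypothetical review results for two providers.
reviews = [("dr_a", "low", "moderate")] * 3
reviews += [("dr_a", "moderate", "moderate")] * 3
reviews += [("dr_b", "high", "high")] * 5
drift = mdm_drift_by_provider(reviews)
```

In this example, dr_a is flagged for possible undercoding because half of the sampled notes were documented below the reviewed level, while dr_b is not flagged. A flag is a prompt for closer review, not a finding in itself.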

What This Looks Like in Practice

Governance frameworks are easiest to dismiss when they stay abstract. Here is what a functioning AI note validation workflow actually looks like in a working emergency department, shift by shift.

The AI scribe generates the note during the encounter. The physician reviews it at a natural break point - immediately post-encounter or at a brief review window before the next patient - checking specifically for clinical accuracy against their recollection of the visit, not just scanning for formatting. The platform flags any MDM level discrepancies before attestation, giving the physician a prompt to review whether the documented complexity matches the care provided. The physician signs off and the note enters the record.

On a weekly or monthly basis depending on department size, a designated reviewer pulls a sample of AI-generated notes for accuracy review against the governance checklist. Errors are logged by type - hallucination, omission, MDM drift - and patterns are identified. Systematic issues are escalated to the vendor. Findings are shared with providers at a departmental level, not as individual accountability moments but as operational feedback.
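The logging step described above can be as simple as a tally by error type with a rule for when a pattern is escalated. The following is a minimal sketch, assuming findings are recorded as (note_id, error_type) pairs; the function name and the escalation threshold of three are hypothetical and would need to be set by the department.

```python
from collections import Counter

# The three error types named in the audit workflow above.
ERROR_TYPES = ("hallucination", "omission", "mdm_drift")


def summarize_findings(findings, escalate_at=3):
    """Count findings by type and list the types that warrant escalation.

    `findings` is a list of (note_id, error_type) pairs from one review cycle.
    `escalate_at` is an illustrative threshold, not a standard.
    """
    for note_id, error_type in findings:
        if error_type not in ERROR_TYPES:
            raise ValueError(f"unknown error type for {note_id}: {error_type}")

    counts = Counter(error_type for _, error_type in findings)
    to_escalate = [t for t in ERROR_TYPES if counts[t] >= escalate_at]
    return counts, to_escalate


# Hypothetical findings from one monthly review.
findings = [
    ("N-101", "omission"),
    ("N-114", "omission"),
    ("N-120", "hallucination"),
    ("N-133", "omission"),
]
counts, to_escalate = summarize_findings(findings)
```

Here the three omission findings cross the assumed threshold, so omissions would go to the vendor as a systematic issue while the single hallucination stays on the log for trend tracking.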

The point of laying this out is straightforward: governance doesn't have to be a separate administrative burden layered on top of clinical workflow. When it's built into the platform and the process, most of it runs in the background. The departments that experience governance as burdensome are typically the ones that implemented AI documentation first and tried to build the framework afterward.

What to Require from Your AI Scribe Vendor

Before any AI scribing platform goes live in an ED, the compliance officer and CMO should be able to get clear answers to four specific questions. These aren't technical questions - they're governance questions, and a vendor that can't answer them is telling you something important about how they think about accountability.

Does the platform hold SOC 2 Type 2 certification? This is the baseline for independent verification of data handling practices. Marketing language about security is not a substitute for a third-party audit result.

Is MDM billing analysis built into the core documentation workflow, or is it a separate add-on? A platform that transcribes accurately but doesn't analyze billing level is solving half the problem. In an emergency medicine context, where every note carries a direct coding consequence under the 2023 CMS E/M guidelines, that gap matters enormously.

What audit logging exists within the platform? The organization needs to be able to establish, for any note, when it was generated, whether it was edited, and when it was attested. That log is what makes an AI-generated note defensible in an audit scenario.
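To show what answering those three questions from a log might look like, here is a small sketch. It assumes the platform can export events with a note identifier, an action, an actor, and a timestamp; the AuditEvent fields, the action names, and the function are hypothetical and do not describe any specific vendor's log format.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class AuditEvent:
    """One exported log entry (hypothetical shape)."""
    note_id: str
    action: str   # assumed values: "generated", "edited", "attested"
    actor: str
    at: datetime


def note_timeline(events, note_id):
    """Report when a note was generated, whether it was edited, and when it was attested."""
    mine = sorted((e for e in events if e.note_id == note_id), key=lambda e: e.at)

    def first(action):
        # Timestamp of the earliest event with this action, or None.
        return next((e.at for e in mine if e.action == action), None)

    attested_at = first("attested")
    edits = [e for e in mine if e.action == "edited"]
    return {
        "generated_at": first("generated"),
        "edited": bool(edits),
        "attested_at": attested_at,
        # Edits after attestation usually deserve a closer look.
        "edited_after_attestation": attested_at is not None
        and any(e.at > attested_at for e in edits),
    }


# Hypothetical log for a single note.
events = [
    AuditEvent("N1", "generated", "ai_scribe", datetime(2024, 1, 5, 10, 0)),
    AuditEvent("N1", "edited", "dr_a", datetime(2024, 1, 5, 10, 12)),
    AuditEvent("N1", "attested", "dr_a", datetime(2024, 1, 5, 10, 15)),
]
timeline = note_timeline(events, "N1")
```

If a vendor's export cannot support a query like this for an arbitrary note, that is worth raising before go-live rather than during an audit.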

What is the vendor's process for systematic error reporting and model feedback? If the answer is a help desk ticket and a vague promise of review, the vendor has not built accountability into their process. The answer should include a defined escalation path, a documented response protocol, and a feedback loop to the model.

Choosing an AI scribe platform without asking these questions isn't a technology decision - it's a governance decision by omission. The platform becomes part of your documentation infrastructure, and its governance capabilities become your governance capabilities.

How DocAssistant AI Was Built for Governance-Ready Deployment

Most of the governance gaps organizations encounter with AI documentation tools trace back to the same root cause: platforms that were designed for documentation efficiency first and compliance architecture second - or not at all.

DocAssistant AI was built specifically for emergency medicine, which means the governance requirements of a high-volume, high-billing-complexity clinical environment were part of the design from the start, not retrofitted after the fact. That specificity maps directly to the four vendor requirements above.

SOC 2 Type 2 certification is in place. Not a claim - a verified result from an independent audit of data handling and security architecture. For compliance officers who need a defensible answer to the data security question, that certification is it.

The MDM billing analyzer is integrated into the core documentation workflow rather than offered as a separate module. Every chart is reviewed against the 2023 CMS E/M guidelines in real time, and undercoding is flagged before attestation. Physicians receive a prompt during their review window, not a separate system to navigate. The governance control and the clinical workflow are the same step.

The platform is EHR-agnostic. That matters for governance because it means the audit logging, the documentation infrastructure, and the compliance architecture aren't dependent on a single EHR vendor's implementation decisions. The organization retains control of its governance framework regardless of what happens to its EHR relationship.

The outcome of all of this in practice is documented at Elite Hospital Partners, where a real ED implementation - not a demo environment - produced an 85% reduction in charting time per provider and $399,000 recovered per provider per year in previously lost revenue. Those numbers came from an environment where governance requirements are real, regulatory scrutiny is real, and the documentation accuracy that drives both billing recovery and audit defensibility had to perform consistently across every shift, not just under favorable conditions. For anyone building the internal case for why governance-ready AI documentation is worth the investment, the data behind that outcome is worth a close look.

Making the Case Internally

If you're the CMO, compliance officer, or ED medical director who has worked through this framework and now needs to bring leadership, legal, or a board to the same conclusion, the argument comes down to this:

The organization is responsible for the accuracy of every note in its system regardless of how it was generated. That responsibility doesn't make AI documentation a liability - it makes the choice of platform and the design of the validation framework the critical decisions. A well-designed AI documentation platform with a functioning governance framework is not a compliance risk. It is, in many ways, more auditable and more consistent than a documentation model that depends entirely on human scribes with variable training, variable performance, and no systematic accuracy review built into the process.

The question to bring to leadership isn't whether AI documentation is safe. The question is whether the organization has the governance infrastructure to make it defensible. The five-component framework in this article is that infrastructure. It's not complex, it doesn't require new headcount, and when it's built into the right platform it runs with minimal administrative overhead. What it does require is a deliberate decision to build it before deployment rather than after.

For anyone who wants to see how other ED teams are addressing similar documentation errors and compliance challenges before they become audit findings, the patterns are more common and more preventable than most departments realize until they look closely.

Conclusion

The governance question around AI documentation - how do we know these notes are accurate, and what happens if one gets audited - has a clear answer. You know because you have a validation process that catches errors systematically and a platform whose architecture was designed to support that process. And if a note gets audited, you have a documented governance framework, a physician attestation protocol, audit logging, and independent security verification backing your position.

That's not a guarantee against every possible compliance scenario. No framework is. But it is a defensible, well-reasoned operational posture - which is exactly what clinical governance is designed to produce.

If your organization is currently deploying or evaluating AI documentation and wants to review your governance readiness, DocAssistant AI offers structured sessions for ED compliance and clinical leadership teams - a direct look at your current validation process, your MDM billing accuracy exposure, and how the platform's built-in governance architecture maps to your specific compliance requirements. No commitment required to have that conversation.

About DocAssistant

DocAssistant develops HIPAA-compliant AI documentation and medical coding solutions purpose-built for emergency medicine. Founded by practicing emergency physicians and headquartered in San Diego, California, DocAssistant combines automated clinical documentation with specialty-specific AI to reduce documentation burden, improve ICD-10 coding accuracy, and increase revenue capture for physicians, billing teams, and healthcare organizations. The company’s AI coding tool and AI scribe platform are designed to help medical billing teams, revenue cycle professionals, and clinicians work faster and document more completely. More information is available at www.docassistant.ai.

Media Contact:

DocAssistant Team

+1 619-344-0849