How Contextual Understanding Sets Next-Gen AI Scribes Apart

Generic AI scribes transcribe words. Next-gen AI scribes understand clinical context. Learn the 5 capabilities that separate surface transcription from deep contextualization.

Two AI scribes hear a physician say: "Negative troponin, but given his history, I'm still concerned." One transcribes those exact words and stops. The other understands the physician is expressing persistent cardiac concern despite normal biomarkers, recognizes this triggers higher medical decision-making complexity, and prompts for risk stratification documentation. That's the difference between surface transcription and contextual understanding.

Most AI scribes are sophisticated speech-to-text engines. They capture what physicians say with impressive accuracy (95% or higher), fast processing, and clean formatting. But they miss what physicians mean. When a physician says "chest pain, EKG normal, going home," surface transcription writes exactly that and stops. It creates charts that look complete but miss critical elements: why the patient needed emergency department evaluation instead of urgent care, what cardiac risk factors were considered, what differential diagnosis guided decision-making.

Contextual understanding AI works differently. Using the same statement, it recognizes gaps immediately. Medical necessity isn't established. Cardiac risk factors aren't documented. The differential diagnosis isn't articulated. It prompts: "Document cardiac risk factors and why immediate evaluation was needed to establish medical necessity for ED-level care."

This difference determines whether an AI scribe merely saves time or also protects revenue. Surface transcription creates compliant-looking charts that get denied or downcoded. Contextual understanding prevents revenue loss by catching gaps in real time: a 30-second prompt prevents a $200 denial three weeks later.

In emergency medicine, context is everything. Patients arrive with symptoms that could have dozens of different causes. Documentation must show the thinking process, not just the final diagnosis. The same words mean different things based on patient history, symptoms, and clinical trajectory.

This article explains the five capabilities that define true contextual understanding, how to evaluate whether an AI scribe actually has them, and why this matters more in emergency medicine than any other specialty.

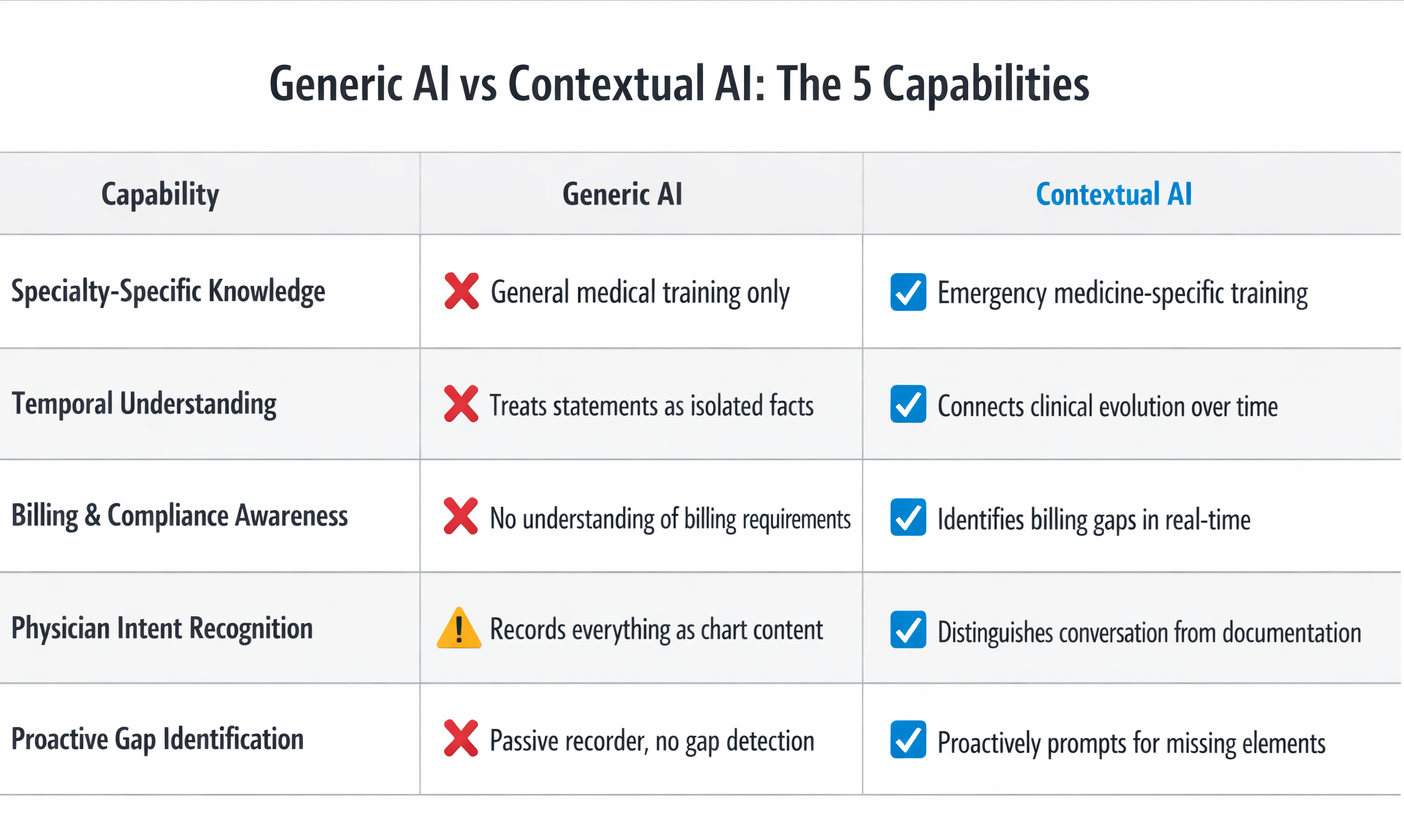

The 5 Capabilities That Define Contextual Understanding

True contextual understanding in AI scribes requires five distinct capabilities working together. Generic AI scribes may have one or two. Next-gen AI scribes trained specifically on emergency medicine have all five.

1. Specialty-Specific Clinical Knowledge

This means the AI is trained exclusively on emergency medicine documentation, not general medical records from multiple specialties. It understands ED-specific presentations, workflows, and billing requirements. It recognizes when emergency physicians use specialty shorthand that carries meaning other specialties might miss.

The training data determines everything. AI is only as good as what it learned from. Generic AI is trained on mixed specialties—primary care visits, surgical notes, specialty clinic documentation. These encounters follow predictable patterns. Primary care: established diagnosis, medication adjustment, follow-up plan. Surgery: procedure performed, findings, post-op care. Emergency medicine doesn't work this way.

Emergency department presentations are undifferentiated: the same symptom can have vastly different causes requiring completely different workups. A patient with chest pain in a cardiology clinic likely has a cardiac issue. A patient with chest pain in an emergency department could be experiencing a heart attack, blood clots in the lungs, a torn blood vessel, a collapsed lung, an esophageal tear, muscle strain, anxiety, or a dozen other conditions.

Contextual AI trained on emergency medicine recognizes that emergency physicians must document broad differential diagnosis and the rule-out process. This isn't academic—it's the difference between a claim that pays and one that gets denied. When a chart shows "chest pain" without documenting why emergency evaluation was necessary instead of urgent care or clinic follow-up, insurance companies deny payment.

Generic AI just writes "chest pain" and moves on because in other specialties, that's often sufficient context. In emergency medicine, it's documentation that triggers denials.

The best training data includes thousands of complete ED encounters showing proper documentation of medical necessity, appropriate medical decision-making complexity, complete procedure notes, and critical care time tracking. It includes insurance denial patterns specific to emergency medicine—what gaps trigger denials, what elements must be present to survive audits.

The feedback loop matters tremendously. Contextual AI improves through emergency medicine–specific usage. Every time documentation gets accepted or denied by insurance companies, the AI learns what works and what doesn't. It adapts to individual department patterns over time. Generic AI can't replicate this expertise by adding emergency medicine training after initial development on general medical documentation.

Real-world impact: Specialty-trained AI catches ED-specific documentation gaps that generic AI misses. Studies show generic AI misses 30-40% of context-dependent billing requirements specific to emergency medicine. This results in downcoding—the insurance company pays for a lower level of service than was actually provided—even when the care was completely appropriate and necessary.

2. Temporal Understanding and Clinical Trajectory

This means the AI understands how a patient's condition evolves during the ED visit. It tracks clinical decision-making over time, not just final disposition. It recognizes when an evolving presentation changes what documentation is needed.

Generic AI treats each statement as isolated fact. It doesn't connect initial presentation → workup → reassessment → disposition.

Example: Patient arrives with mild abdominal pain. Labs show elevated lipase. Imaging confirms pancreatitis. Contextual AI recognizes: (1) initial presentation was vague, (2) workup revealed a serious diagnosis, (3) medical necessity just got stronger, (4) medical decision-making complexity increased. It prompts the physician to document the initial differential that justified the extensive workup.

Generic AI just records final diagnosis—pancreatitis—without connecting the dots. When insurance reviews the chart, they see "mild abdominal pain" and question why so much testing was done.

Temporal understanding also matters for critical care billing. A physician spends 60 minutes managing diabetic ketoacidosis (DKA)—continuous monitoring, multiple medication adjustments, frequent lab checks. This qualifies as critical care, which pays substantially more than regular ED visits.

Generic AI documents clinical course but doesn't recognize this represents critical care time. Contextual AI recognizes the pattern and prompts: "This case appears to meet critical care criteria. Document start and stop times and total minutes." Revenue protected: $300 to $400.

3. Billing and Compliance Awareness

This means the AI understands what documentation elements are required for specific billing codes. It recognizes when physician statements don't meet CMS (Centers for Medicare & Medicaid Services) or insurance company requirements. It knows when medical necessity needs strengthening to survive payer review.

Generic AI has no training on billing requirements or payer policies. It assumes a complete sentence equals complete documentation. It doesn't recognize when charts are vulnerable to denials or downcoding.

Here's a concrete example. A physician performs a laceration repair and says "stitched up the hand laceration." Generic AI writes "laceration repair performed" and considers the documentation complete.

But procedures aren't billable without complete documentation. Billing requires specific elements: indication (why the procedure was necessary), technique (how it was done), complications (any problems during the procedure), wound characteristics (size, depth, location), and time spent. Without these elements, coders can't submit the procedure for billing. The hospital delivered the care but can't get paid for it.

Contextual AI recognizes the gap immediately. It prompts: "Procedure documentation incomplete. Please document: indication, technique, laceration size, complications if any, and time spent." The physician takes 30 seconds to add these details. Revenue protected: $150 to $300.
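As a rough illustration of the check described above, the sketch below tests a procedure note for the elements listed in this section and builds a prompt for whatever is missing. The element names, the dictionary-based note, and both function names are hypothetical choices made for illustration. This is not a description of any vendor's implementation, and actual payer requirements vary.

```python
from typing import Dict, List, Optional

# Assumed element list, copied from the billing elements named in the text.
REQUIRED_PROCEDURE_ELEMENTS = (
    "indication",
    "technique",
    "wound characteristics",
    "complications",
    "time spent",
)


def missing_procedure_elements(note: Dict[str, str]) -> List[str]:
    """Return the required elements that are absent or blank in the note."""
    return [
        element
        for element in REQUIRED_PROCEDURE_ELEMENTS
        if not note.get(element, "").strip()
    ]


def build_prompt(note: Dict[str, str]) -> Optional[str]:
    """Build a physician-facing prompt, or None when nothing is missing."""
    missing = missing_procedure_elements(note)
    if not missing:
        return None
    return (
        "Procedure documentation incomplete. Please document: "
        + ", ".join(missing)
        + "."
    )


# Hypothetical draft note: only the technique has been captured so far.
draft = {"technique": "simple interrupted sutures"}
print(build_prompt(draft))
```

A real system would have to infer these elements from free-text dictation rather than from a pre-structured dictionary. The sketch only shows the completeness rule itself.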

This happens constantly in emergency departments. Physicians reduce dislocated shoulders, drain abscesses, remove foreign bodies, repair complex lacerations—all billable procedures. But documentation happens between managing other critically ill patients. It's easy to mention a procedure without formally documenting all required elements.

Research demonstrates that insufficient medical decision-making documentation is a primary driver of downcoding in emergency medicine, where clinical complexity consistently exceeds what appears in charts. Contextual AI addresses this by ensuring complexity is documented, not just performed.

The real-world impact is measurable. Departments using contextual AI see 20-30% more procedures captured compared to generic transcription AI. Critical care documentation improves by 40-50% when the AI prompts for time tracking. Medical necessity denials decrease 40-60% when gaps are caught in real-time rather than discovered weeks later during insurance review.

4. Physician Intent Recognition

This means the AI distinguishes between casual conversation and documentation intent. It understands when the physician is thinking aloud versus stating something for the chart.

Generic AI records everything as if it's meant for the medical record. It can't distinguish "I wonder if this could be appendicitis" from "the diagnosis is appendicitis."

Example: Physician says to nurse: "Could be appendicitis, could be ovarian torsion, could be kidney stone—let's get CT." This is thinking through possibilities, not making definitive statements.

Generic AI might write: "Possible appendicitis, possible ovarian torsion, possible kidney stone." This looks indecisive.

Contextual AI recognizes this is differential diagnosis development and structures it properly: "Differential diagnosis includes appendicitis, ovarian torsion, and nephrolithiasis. CT abdomen/pelvis ordered to differentiate and guide management." This shows appropriate medical decision-making complexity.

5. Proactive Gap Identification

This means the AI identifies what's missing, not just what's present. It recognizes patterns that indicate incomplete documentation.

Generic AI only processes what's said. It has no framework for recognizing when something important hasn't been documented.

Example: Patient presents with diabetes, altered mental status, and abnormal labs. Contextual AI recognizes this pattern likely represents DKA. It prompts: "This presentation suggests DKA management. If critical care was provided, document start and stop times. List specific interventions performed." The physician confirms 55 minutes were spent. Revenue captured: $350.

Another example: Patient gets multiple diagnostic studies—CT scan, ultrasound, multiple lab panels. Contextual AI prompts: "Document the differential diagnosis that justified this workup to establish medical necessity." This prevents insurance from questioning why so many tests were performed.

Real-world impact: Critical care billing captures additional $200-400 per case. Procedure documentation prevents $100-300 loss per undocumented procedure. Medical necessity denials decrease from 8-10% to 3-4%.

Research on AI-assisted clinical documentation reports measurable improvements in coding accuracy and completeness, with a direct impact on revenue capture across emergency department settings.

Quick Reference: What Each Capability Catches

- Specialty Knowledge: Identifies when ED-specific documentation requirements aren't met (prevents 30-40% of context-dependent denials)

- Temporal Understanding: Recognizes evolving presentations requiring updated documentation (captures $300-400 in critical care billing when time is tracked)

- Billing Awareness: Flags incomplete procedure documentation and missing medical necessity (20-30% more procedures captured, denials drop 40-60%)

- Intent Recognition: Distinguishes thinking aloud from chart statements (properly structures differential diagnosis for MDM complexity)

- Gap Identification: Proactively prompts for missing elements before chart closure (prevents $100-400 per case in lost revenue)

How to Evaluate Contextual Understanding in AI Scribes

Most AI scribe vendors claim contextual understanding. Here's how to test whether it's real or just marketing language. Use these five tests during vendor demonstrations:

Evaluation Checklist:

1. Incomplete Statement Test

- What to say: "Patient has chest pain, EKG normal, troponin negative, going home."

- What good AI does: Flags missing cardiac risk factors, symptom characteristics, differential diagnosis, and medical necessity justification

- What generic AI does: Transcribes verbatim and stops

- Why it matters: Charts look complete but get denied for insufficient medical necessity

2. Specialty Vocabulary Test

- What to say: "Reduced the shoulder, post-reduction films negative."

- What good AI does: Recognizes procedure performed, prompts for formal documentation (indication, technique, complications)

- What generic AI does: Writes verbatim without recognizing billing implications

- Why it matters: Procedure isn't billable without required elements (revenue lost: $300-400)

3. Evolving Presentation Test

- What to say: Start with "mild abdominal pain" → add "elevated white count" → finish with "CT shows appendicitis"

- What good AI does: Connects timeline, prompts to document initial differential justifying workup

- What generic AI does: Treats each statement independently without connecting clinical narrative

- Why it matters: Insurance questions why extensive testing was done for "mild" symptoms

4. Critical Care Recognition Test

- What to say: "Managed septic shock: started pressors, fluid boluses, vent adjustments, frequent labs, continuous monitoring for an hour."

- What good AI does: Recognizes critical care criteria, prompts for time documentation

- What generic AI does: Documents excellent clinical care but misses billing opportunity

- Why it matters: Revenue lost: $300-400 per case

5. Medical Necessity Test

- What to say: "Patient fell yesterday, bumped head, no loss of consciousness, normal exam, going home."

- What good AI does: Prompts for mechanism, forces, symptoms, risk factors to establish why ED evaluation was appropriate

- What generic AI does: Writes verbatim, leading to denial for insufficient justification

- Why it matters: Insurance denies the claim, saying the patient should have seen a regular doctor instead

Why Emergency Medicine Needs Contextual Understanding More Than Other Specialties

Emergency medicine has unique characteristics making contextual understanding essential rather than optional:

Undifferentiated Presentations

- Patients arrive without a diagnosis; they haven't been pre-screened or sorted

- Same symptom = dozens of possible conditions (chest pain could be heart attack, blood clots, torn vessels, collapsed lung, acid reflux, muscle strain, or many others)

- Documentation must show physician reasoning and rule-out process, not just final answer

- Other specialties see patients with known diagnoses; EM physicians must document broad differential justifying emergency-level evaluation

- Charts showing only final diagnosis get denied for insufficient complexity documentation

Time Pressure and Multi-Patient Management

- Emergency physicians simultaneously manage 3-4 patients at different care stages

- Documentation happens between critical interventions

- Easy to miss elements when context-switching between multiple sick patients

- Contextual AI catches gaps in real-time, prompting while details are fresh

The Data:

- Emergency medicine denial rates: 8-12% (compared to 4-6% for most specialties)

- Contextual AI trained on EM billing requirements: reduces denials to 3-4%

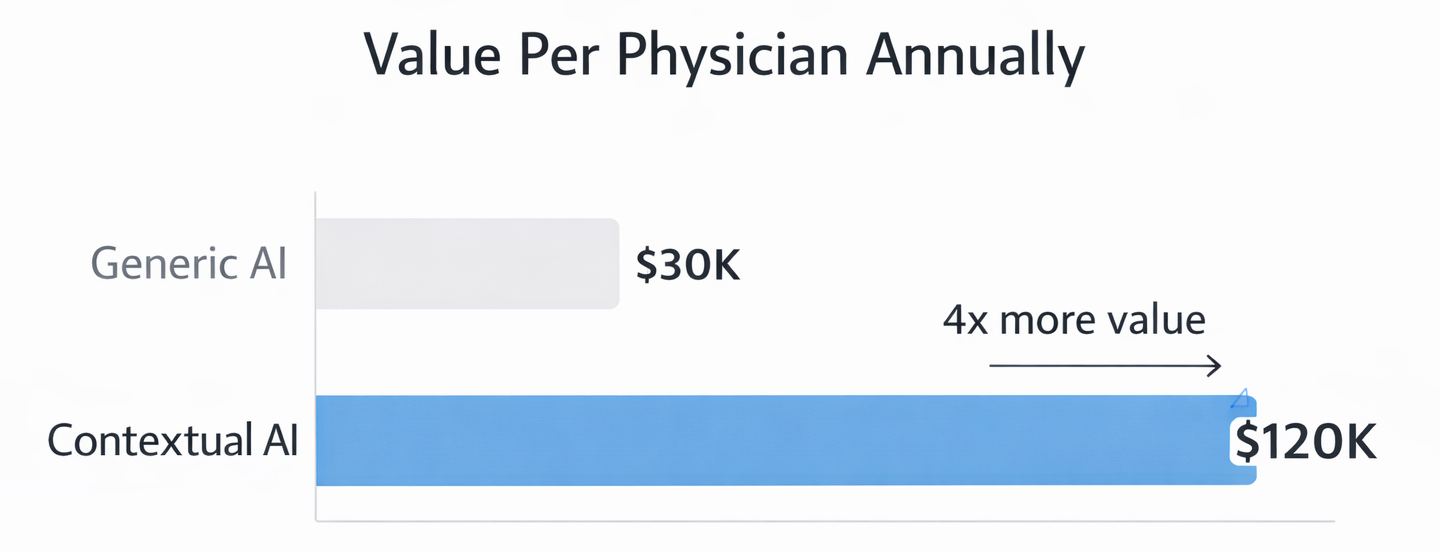

Building the Business Case for Contextual AI

Different stakeholders evaluate AI scribe investments from different perspectives. Here's how contextual AI delivers value to each:

For Finance Leadership:

- What generic AI delivers: Time savings only

- What contextual AI delivers: Time savings + revenue protection

- Measurable difference: 15-25% increase in revenue captured per physician annually

- 12-physician ED example: $960K-$1.8M in protected revenue vs. $240K-$480K in additional cost, for a net benefit of $720K-$1.32M annually

- Payback period: 2-3 months
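The arithmetic behind the 12-physician example can be checked with a few lines of code. The sketch below uses only the dollar ranges quoted in the list above; the function name and the per-physician breakdown are additions for illustration, not figures from the article.

```python
PHYSICIANS = 12  # department size from the example above


def net_benefit(protected_revenue: int, added_cost: int) -> int:
    """Annual net benefit: protected revenue minus additional cost."""
    return protected_revenue - added_cost


# Low and high ends of the ranges quoted in the example (USD per year).
low = net_benefit(960_000, 240_000)
high = net_benefit(1_800_000, 480_000)

print(f"Net benefit: ${low:,} to ${high:,} per year")
print(f"Per physician: ${low // PHYSICIANS:,} to ${high // PHYSICIANS:,} per year")
# Net benefit: $720,000 to $1,320,000 per year
# Per physician: $60,000 to $110,000 per year
```

Substituting a department's own revenue and cost estimates into `net_benefit` gives a quick first pass at the same comparison.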

For Clinical Leadership:

- Problem with generic AI: Doesn't prevent frustrating queries from HIM days later about incomplete documentation

- How contextual AI helps: Catches errors in real time (30-second prompt during encounter vs. 10-minute query reconstruction)

- Result: Charts complete first time, physicians trust the system

- Impact on burnout: Emergency physicians cite documentation burden as a primary burnout contributor; contextual AI acts as a documentation partner

For IT and Implementation Leaders:

- Integration complexity: Identical to generic AI (same EHR connection, infrastructure)

- Training time: Similar (10-15 minutes)

- The difference: Intelligence, not complexity

Real-World Examples of Contextual Understanding in Action

Example 1: The Missed Procedure

A physician evaluates a trauma patient with multiple injuries. During the evaluation, she reduces a dislocated shoulder, putting it back into the socket. She mentions this to the nurse and the patient, discusses post-reduction care, and orders x-rays to confirm the reduction was successful.

The physician is focused on managing the patient's other injuries and coordinating trauma team care. Documentation happens later. She documents the trauma evaluation, the imaging findings, the treatment plan. She mentions in the narrative that the shoulder was reduced.

Generic AI transcribes this perfectly: "Shoulder dislocation reduced, post-reduction films show appropriate position." The clinical note is accurate. But there's no formal procedure documentation.

Without procedure documentation, coding can't bill for the reduction. Required elements are missing: indication for the procedure, technique used (what method of reduction), number of attempts needed, complications if any, confirmation of success. The procedure was performed and benefited the patient, but it's not billable.

Contextual AI recognizes the problem immediately. When the physician mentions reducing the shoulder, the AI flags it: "Procedure identified but not formally documented. Please add procedure note for shoulder reduction including indication, technique, attempts, and complications."

The physician takes 30 seconds to add: "Closed reduction of right shoulder dislocation performed. Indication: traumatic anterior shoulder dislocation. Technique: Modified Stimson method. One attempt required. No complications. Post-reduction films confirm appropriate position."

Revenue captured: $350 that would have been completely lost. The procedure was done either way. Contextual understanding made it billable.

Example 2: The Critical Care Case

A patient arrives in septic shock—severe infection causing dangerously low blood pressure. The physician spends 70 continuous minutes at the bedside starting medications to support blood pressure, giving IV fluids, adjusting the ventilator settings, ordering and reviewing multiple lab tests, and continuously reassessing the patient's response to treatment.

This absolutely meets critical care billing criteria. But the physician is focused on managing the life-threatening condition, not on tracking time. Documentation happens after the patient stabilizes enough to transfer to the intensive care unit.

Generic AI creates an excellent clinical note documenting all the interventions, the clinical reasoning, and the patient's improvement. But it doesn't prompt for critical care time documentation because that's a billing concept, not a clinical concept.

The chart bills as a level 5 emergency visit, the highest regular evaluation and management code. That is appropriate given the complexity, but it misses critical care billing, which would pay $300 to $400 more for the same encounter.

Contextual AI recognizes the pattern during documentation. Septic shock plus multiple interventions plus extended management equals probable critical care. It prompts: "This case appears to meet critical care criteria based on documented interventions and patient severity. If you provided critical care, document start time, stop time, and total minutes."

The physician confirms: "Critical care start time 14:35, stop time 15:45, total time 70 minutes providing critical care for septic shock with hemodynamic instability requiring continuous intervention and reassessment."

Revenue captured: $380 additional reimbursement for critical care billing versus regular evaluation and management coding. The work was identical either way. Contextual understanding made the difference between appropriate billing and underbilling.
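The time calculation in the physician's statement is simple enough to sketch in code. The example below computes total minutes from documented start and stop times and compares the result to a minimum threshold. The function names are hypothetical, and the 30-minute minimum is an assumption based on the commonly cited floor for time-based critical care coding; it should be verified against current CPT and payer rules.

```python
from datetime import datetime, timedelta

# Assumption: minimum documented minutes before critical care can be billed.
MIN_CRITICAL_CARE_MINUTES = 30


def critical_care_minutes(start: str, stop: str) -> int:
    """Return whole minutes between two HH:MM times on a 24-hour clock."""
    fmt = "%H:%M"
    begin = datetime.strptime(start, fmt)
    end = datetime.strptime(stop, fmt)
    if end < begin:
        # Assume the encounter ran past midnight.
        end += timedelta(days=1)
    return int((end - begin).total_seconds() // 60)


def meets_time_threshold(minutes: int) -> bool:
    """True when documented time reaches the assumed minimum."""
    return minutes >= MIN_CRITICAL_CARE_MINUTES


total = critical_care_minutes("14:35", "15:45")
print(total, meets_time_threshold(total))  # 70 True
```

Time is only one part of the picture. Whether an encounter qualifies as critical care also depends on the patient's condition and the interventions provided, which this sketch does not evaluate.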

Example 3: The Medical Necessity Gap

A patient with a history of blood clots presents with mild calf pain. The pain is mild, the physical exam is unremarkable, but the physician is concerned given the patient's history and orders an ultrasound to rule out deep vein thrombosis—a potentially dangerous blood clot.

The ultrasound is negative. The patient goes home with instructions to follow up if symptoms worsen. Appropriate care that ruled out a serious condition.

Generic AI documents: "Calf pain. History of DVT. Ultrasound negative. Discharge home." This accurately captures what happened clinically.

Three weeks later, insurance denies the claim. Denial reason: "Medical necessity not established. Mild symptoms with negative findings could have been evaluated in urgent care or outpatient setting. Emergency department–level care not justified."

The hospital appeals, explaining the history of blood clots made immediate ultrasound necessary to rule out dangerous recurrence. Sometimes the appeal succeeds. Often it doesn't. Either way, it requires staff time to fight for payment that should have been straightforward.

Contextual AI prevents this entirely. When documenting the encounter, it recognizes a medical necessity gap. Mild symptom plus expensive imaging plus discharge home equals vulnerable claim unless risk factors are clearly documented.

It prompts: "Document risk factors and clinical reasoning for ED-level evaluation to establish medical necessity. Why was immediate ultrasound necessary rather than outpatient follow-up?"

The physician adds: "Given patient's history of prior DVT and current anticoagulation, new leg pain required immediate evaluation to rule out recurrent thrombosis. Symptoms alone were mild, but high-risk history necessitated urgent imaging that would not be available in urgent care or outpatient settings. Negative ultrasound provides reassurance that immediate anticoagulation adjustment is not needed."

The claim processes without issue. Medical necessity clearly established. No denial. No appeal. No wasted staff time. Revenue protected through better documentation of clinical reasoning that was always present but might not have been explicitly stated.
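The pattern in this example (mild symptom plus imaging plus discharge home, with no documented risk factors) can be written as a simple rule. The sketch below is a deliberately simplified illustration: the `Encounter` fields, their allowed values, and the function name are all hypothetical, and a production system would need far richer clinical and payer logic.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Encounter:
    """Hypothetical, heavily simplified summary of an ED encounter."""
    symptom_severity: str           # e.g. "mild", "moderate", "severe"
    imaging_ordered: bool           # any imaging study, such as an ultrasound
    disposition: str                # e.g. "discharge", "admit"
    documented_risk_factors: List[str] = field(default_factory=list)


def is_vulnerable_claim(encounter: Encounter) -> bool:
    """Flag mild symptom + imaging + discharge when no risk factors are charted."""
    matches_pattern = (
        encounter.symptom_severity == "mild"
        and encounter.imaging_ordered
        and encounter.disposition == "discharge"
    )
    return bool(matches_pattern and not encounter.documented_risk_factors)


before = Encounter("mild", True, "discharge")
after = Encounter("mild", True, "discharge", ["prior DVT", "current anticoagulation"])
print(is_vulnerable_claim(before), is_vulnerable_claim(after))  # True False
```

In this framing, the prompt to the physician fires when the rule returns true, and documenting the risk factors is what clears it.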

Conclusion

The difference between transcription and contextualization determines whether AI scribes save time or protect revenue. Surface transcription captures words accurately but misses clinical meaning. Contextual understanding interprets what physicians mean, recognizes gaps, and prompts for missing elements before they cause problems.

Emergency medicine requires contextual understanding more than any other specialty. Patients arrive without diagnoses. A single presentation could be any of dozens of conditions. Documentation must show physician thinking. Time pressure makes gaps inevitable. Billing requirements are complex and specialty-specific.

Five capabilities define true contextual AI: specialty-specific clinical knowledge, temporal understanding, billing awareness, physician intent recognition, and proactive gap identification. Generic AI typically has one or two. Next-gen AI trained on emergency medicine has all five working together.

The evaluation framework provides concrete tests to separate real contextual understanding from marketing claims. Test how AI handles incomplete statements, specialty vocabulary, evolving presentations, critical care recognition, and medical necessity documentation. These tests reveal whether AI truly understands emergency medicine or just transcribes accurately.

The business case favors contextual AI across stakeholder groups. Finance sees 15-25% more revenue captured per physician with minimal additional cost. Clinical leadership sees reduced physician burden and better documentation quality. IT sees identical implementation complexity with substantially more value.

Evaluate your current AI scribe against the five capabilities. Test it with the evaluation framework. If it fails multiple tests, you're paying for sophisticated transcription, not contextual understanding. In emergency medicine, that difference costs real money and creates unnecessary physician frustration.

The next generation of AI scribes understands what physicians mean, not just what they say. That understanding protects revenue, improves documentation quality, and supports physicians during the chaos of emergency medicine practice.

About DocAssistant

DocAssistant develops HIPAA-compliant AI documentation and medical coding solutions purpose-built for emergency medicine. Founded by practicing emergency physicians and headquartered in San Diego, California, DocAssistant combines automated clinical documentation with specialty-specific AI to reduce documentation burden, improve ICD-10 coding accuracy, and increase revenue capture for physicians, billing teams, and healthcare organizations. The company’s AI coding tool and AI scribe platform are designed to help medical billing teams, revenue cycle professionals, and clinicians work faster and document more completely. More information is available at www.docassistant.ai.

Media Contact:

DocAssistant Team

+1 619-344-0849